The Daily Perspective

Thank You & Goodbye

An AI-powered Australian news experiment

28 February – 29 March 2026

What Was The Daily Perspective?

The Daily Perspective was a fully automated AI-powered news website covering Australian news with some global coverage. For 29 days it published articles around the clock, sourcing stories from Australian outlets and rewriting them with independent analysis, and producing original research articles on topics ranging from crime and courts to data analysis and policy explainers.

The entire project was built 100% with Claude. Every line of code, every article, every editorial prompt, the entire infrastructure. From the Cloudflare Workers pipeline to the Astro frontend, from the 33 AI editorial personas to the breaking news detection system, Claude wrote it all.

How It Worked

The automated pipeline ran on Cloudflare's stack: Pages, Workers, D1 (SQLite), KV cache, Images CDN, Queues and Containers. Every 30 minutes, a worker fetched RSS feeds and API headlines from news sources. A rewriter worker consumed the queue, fetched the full article HTML, and sent it to Claude running inside a Cloudflare Container. Claude used web search to verify facts and find additional sources, then wrote an original article informed by the source reporting. A publisher worker saved the article to the database, processed images, pre-rendered HTML, updated the sitemap and auto-posted to Bluesky, Mastodon and X.
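The three stages described above (fetch, rewrite, publish) can be sketched as a simple queue-driven loop. This is a minimal illustration only, not the site's actual code: the class and function names (`Headline`, `Article`, `run_cycle` and so on) are hypothetical, an in-memory `deque` stands in for Cloudflare Queues, a plain list stands in for the D1 database, and the model call is passed in as an ordinary function.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Headline:
    source: str
    url: str
    title: str


@dataclass
class Article:
    title: str
    body: str
    attributed_to: str


def fetch_headlines(feeds):
    """Stage 1 (run every 30 minutes): collect headlines from each feed."""
    return [Headline(source, url, title)
            for source, items in feeds.items()
            for url, title in items]


def rewrite(headline, fetch_html, write_article):
    """Stage 2: fetch the full source HTML and hand it to the model."""
    html = fetch_html(headline.url)
    body = write_article(headline.title, html)
    return Article(headline.title, body, headline.source)


def publish(article, database):
    """Stage 3: persist the article (image, sitemap and social steps omitted)."""
    database.append(article)


def run_cycle(feeds, fetch_html, write_article, database):
    """Run one full cycle and return the number of stored articles."""
    queue = deque(fetch_headlines(feeds))  # hypothetical stand-in for a queue service
    while queue:
        publish(rewrite(queue.popleft(), fetch_html, write_article), database)
    return len(database)
```

Passing `fetch_html` and `write_article` in as parameters keeps the sketch runnable without network access; in a real deployment each stage would be a separate worker.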

33 AI editorial personas across 7 desks (political, business, world, sports, specialist, features, regional) each had distinct writing styles, specialties and timezone-aware shift schedules. An author selection algorithm assigned articles based on category, shift hours and recent usage to ensure variety.
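One plausible shape for such a selection rule is shown below. It is a sketch under stated assumptions rather than the algorithm the site used: the `Persona` fields, the hour-based shift model and the tie-breaking order (on shift first, then least recently used, then name) are all hypothetical, and time zones are reduced to a single local hour supplied by the caller.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    name: str
    categories: set
    shift_start: int          # local hour, inclusive
    shift_end: int            # local hour, exclusive; may wrap past midnight
    recent_articles: int = 0  # how many articles were assigned recently


def on_shift(persona, hour):
    """True if the given local hour falls inside the persona's shift."""
    if persona.shift_start <= persona.shift_end:
        return persona.shift_start <= hour < persona.shift_end
    # Shift wraps past midnight, e.g. 22 -> 6.
    return hour >= persona.shift_start or hour < persona.shift_end


def select_author(personas, category, hour):
    """Pick a persona for the category, preferring on-shift and least-used ones."""
    eligible = [p for p in personas if category in p.categories]
    if not eligible:
        return None
    chosen = min(
        eligible,
        key=lambda p: (not on_shift(p, hour), p.recent_articles, p.name),
    )
    chosen.recent_articles += 1  # record usage so the next pick rotates
    return chosen
```

Incrementing `recent_articles` on each pick is what produces variety: two on-shift personas covering the same category will alternate rather than one taking every story.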

Sources

Articles were sourced from the following RSS feeds and APIs:

- ABC News Australia

- SBS News

- Sydney Morning Herald

- 9News

- 7News

- NewsAPI (Australian headlines)

Categories

Content was published across 15 categories:

- Politics

- Business

- World

- Sports

- Technology

- Health

- Climate

- Crime

- Property

- Culture

- Regional

- Opinion

- Lifestyle

- Education

- Gaming

Original Research

Beyond rewriting news from other outlets, The Daily Perspective could source its own material. A researcher worker ran every two hours, using Claude with web search to produce original articles across 20+ formats: news reports, crime and courts coverage, sports results, business analysis, policy explainers, data analysis, trend pieces, opinion and commentary, profiles, fact checks, cost-of-living reports, infrastructure updates and more.

Research articles drew from authoritative Australian sources: government departments (.gov.au), the ABS, RBA, Bureau of Meteorology, CSIRO, ACCC, court databases, police media releases and parliamentary records. Each article required 3-5 verified sources before publication.
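A pre-publication gate of this kind could look roughly like the following. The details are assumptions made for illustration: the function names are hypothetical, "authoritative" is reduced to a `.gov.au` hostname check, and the 3-source minimum is counted over distinct hostnames, which the original description does not specify.

```python
from urllib.parse import urlparse

MIN_SOURCES = 3  # lower bound of the 3-5 source requirement


def is_authoritative(url, trusted_suffixes=(".gov.au",)):
    """True if the URL's hostname ends with one of the trusted suffixes."""
    host = urlparse(url).hostname or ""
    return any(host.endswith(suffix) for suffix in trusted_suffixes)


def ready_to_publish(verified_urls):
    """Require at least MIN_SOURCES distinct hosts among the verified sources."""
    distinct_hosts = {urlparse(u).hostname for u in verified_urls}
    distinct_hosts.discard(None)  # ignore malformed URLs with no hostname
    return len(distinct_hosts) >= MIN_SOURCES
```

Counting distinct hosts rather than raw URLs is a design choice in this sketch: it stops three pages from the same site from satisfying the requirement on their own.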

A breaking news system could find unreported connections between recent stories, cross-referencing data from different sectors to identify emerging patterns before other outlets reported them.

The Daily Perspective was featured on the ette media podcast. Breaking news detection was added to the existing research pipeline to bring more attention to connected stories.

Copyright & Media Standards

Operating an AI news aggregator in Australia raised serious questions about copyright, fair dealing and media ethics. Here is an honest assessment of where the project was strong and where it fell short.

What We Did Well

- Source attribution: All news reports included mandatory attribution to the outlets that did the original reporting. Key facts were attributed with phrases like "according to ABC News" or "as reported by SBS".

- Strict quotation rules: Only verbatim quotes from source material were used. Fabricated, reconstructed, or "cleaned up" quotes were explicitly prohibited. When exact words were unavailable, indirect speech was used instead.

- Multi-source synthesis: Articles drew from multiple outlets covering the same story, not just paraphrasing a single source. Web search was used to find additional reporting and verify claims.

- Fact verification: Claude used web search to verify key statistics, dates, names and claims against authoritative sources before each article was written.

- Fair political coverage: All political figures received the same standard of scrutiny regardless of party. Criticism was substantive and evidence-based, never personal.

- Geographic accuracy: Locations from source material were preserved exactly. A story from Byron Bay stayed in Byron Bay, never generalised to "northern NSW".

- Anti-clickbait policy: After reader complaints about sensationalist titles, a headline accuracy rule was implemented requiring headlines to reflect the article's main topic.

- Promotional content filtering: Pre-rewrite regex checks and post-rewrite AI classification rejected advertorial or promotional content.

- Deduplication: Content hash and vector similarity checks prevented republishing the same story.

- Corrections: A correction request was received from a local government official and was actioned.
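The deduplication step listed above combines an exact check with a fuzzy one. The sketch below shows the general idea only: the names are hypothetical, a word-count vector stands in for a real embedding model, and the 0.85 similarity threshold is an arbitrary illustrative value, not a figure from the project.

```python
import hashlib
import math
import re
from collections import Counter


def tokens(text):
    """Lowercase alphanumeric words, ignoring punctuation and spacing."""
    return re.findall(r"[a-z0-9]+", text.lower())


def content_hash(text):
    """Hash of the normalised text, so trivial formatting changes still match."""
    return hashlib.sha256(" ".join(tokens(text)).encode("utf-8")).hexdigest()


def embed(text):
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(tokens(text))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class Deduplicator:
    def __init__(self, threshold=0.85):
        self.threshold = threshold
        self.hashes = set()
        self.vectors = []

    def is_duplicate(self, text):
        """Return True for repeats; otherwise remember the text and return False."""
        digest = content_hash(text)
        vector = embed(text)
        if digest in self.hashes:
            return True  # exact match after normalisation
        if any(cosine(vector, seen) >= self.threshold for seen in self.vectors):
            return True  # near-duplicate wording
        self.hashes.add(digest)
        self.vectors.append(vector)
        return False
```

The hash check is cheap and catches straight republication; the similarity check catches the same story with light rewording, at the cost of needing a tuned threshold.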

Where We Were Weak

- Derivative work: Despite attribution and independent structure, the rewritten articles were still fundamentally derived from other outlets' journalism. The distinction between "informed by" and "derived from" is inherently blurry with AI.

- Volume over oversight: Publishing 158 articles per day made meaningful quality oversight impossible. No human reviewed articles before publication.

- No human editorial review: The entire pipeline was automated. While quality scoring and classification caught some issues, there was no human judgement in the loop.

- Image handling: When wire service images (Getty, AAP) were blocked due to licensing, AI-generated replacement images were used. This was functional but raised its own ethical questions.

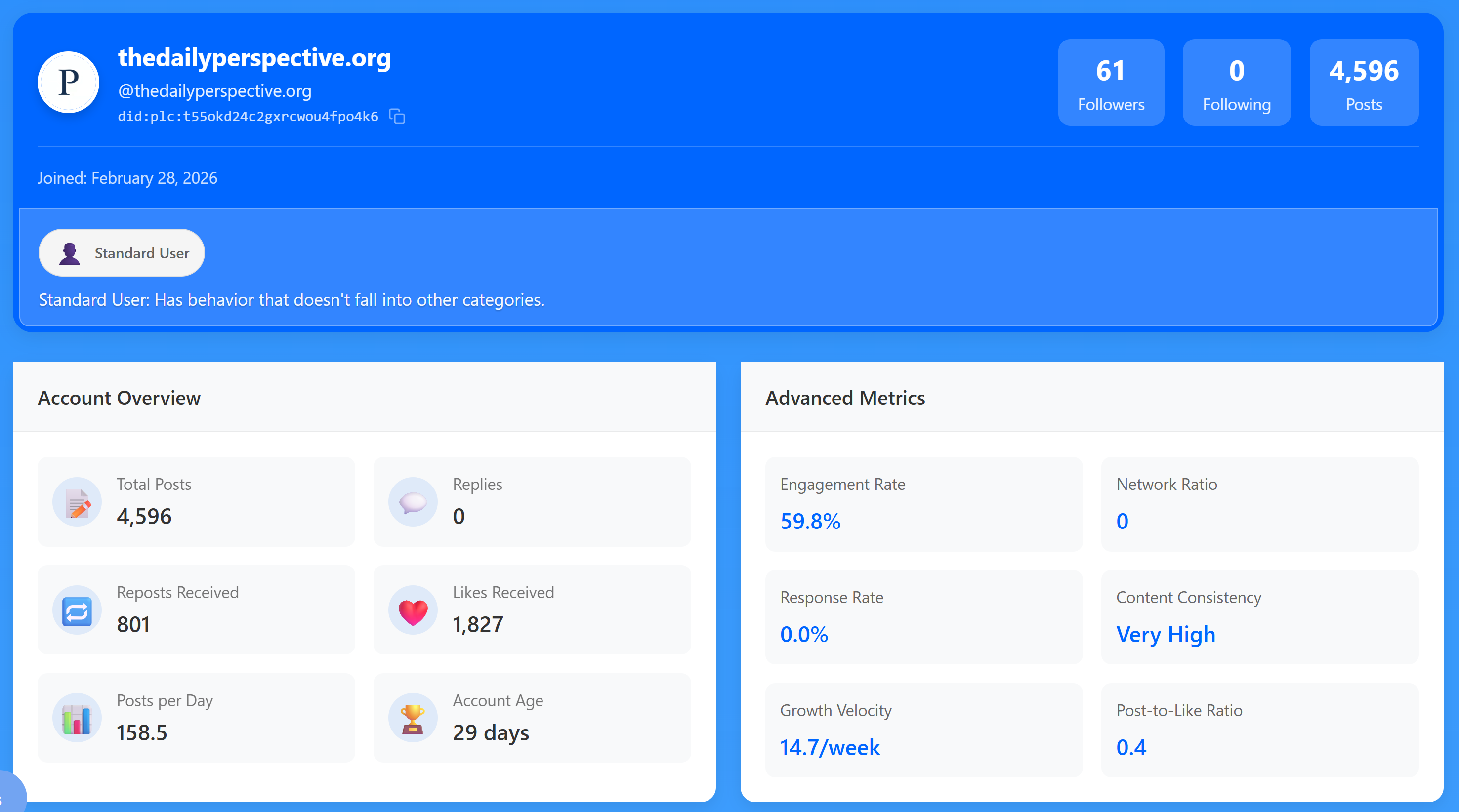

- Social media volume: Automated posting at the rate of 158 posts per day led to bans on Mastodon and X, suggesting the volume was excessive for those platforms.

- Fair dealing reliance: The project relied on fair dealing provisions rather than licensing agreements with the outlets whose reporting informed the articles.

By the Numbers

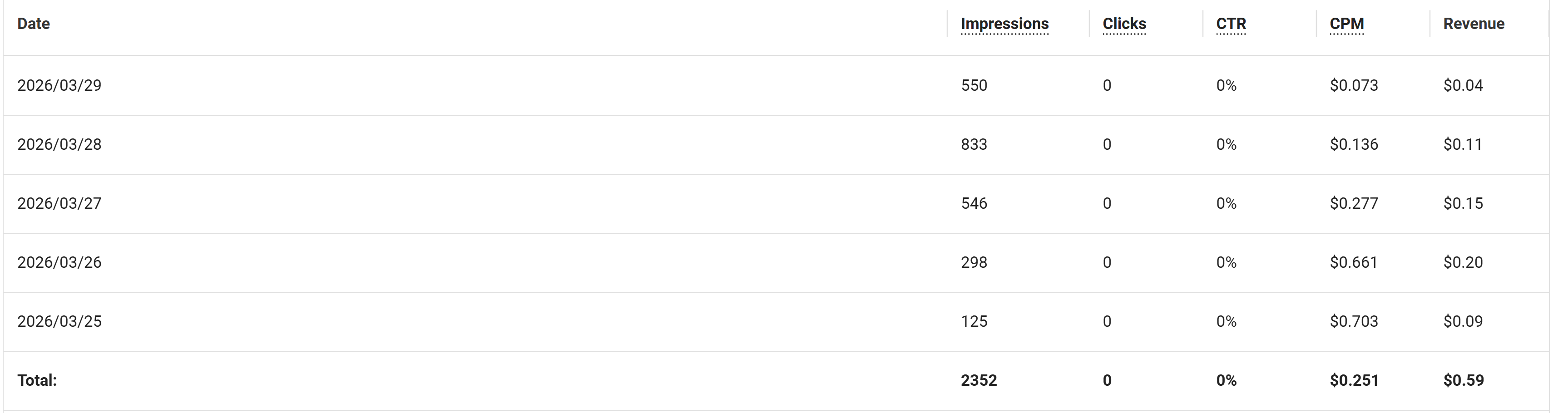

The Economics: $0.59

Over five days of running Adsterra display ads, The Daily Perspective earned a total of $0.59 from 2,352 impressions and zero clicks. The CPM averaged $0.251. This is not a sustainable business model by any measure.
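The CPM figure follows directly from the two numbers above, since CPM is revenue per 1,000 impressions. A short check, with a hypothetical helper name:

```python
def cpm(revenue, impressions):
    """Revenue earned per 1,000 ad impressions."""
    return revenue / impressions * 1000


rate = cpm(0.59, 2352)
print(f"CPM: ${rate:.3f}")  # prints "CPM: $0.251"
```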

The ads also caused user complaints about malware redirects and poor quality. This is a common issue with lower-tier ad networks, and it actively damaged the user experience the site was trying to provide. Higher-tier networks like Google AdSense require approval periods and traffic thresholds that a 29-day experiment could not realistically meet.

An Apology to Our Readers

To anyone who experienced malware redirects, pop-ups or other intrusive behaviour from the ads on this site: we are genuinely sorry. The person behind this project is not a web developer and had an ad blocker running, which meant the actual ad experience was never seen first-hand. That is not an excuse. It was a failure of due diligence, and visitors to the site deserved better. The ads have been permanently removed.

Challenges & Timeline

Reader complaints about sensationalist titles led to implementing strict headline accuracy rules. Headlines had to reflect the article's main topic, not a minor dramatic detail.

The project was discussed on the ette media podcast. This prompted the development of original research and breaking news capabilities, moving beyond pure aggregation.

The account was banned or limited on Mastodon, most likely due to the volume of automated posts (158 per day). Automated content at scale does not sit well with federated social media communities.

After the Mastodon limitation, the project expanded to X/Twitter as an alternative social distribution channel.

X stopped showing articles in feeds. After modifications, the account was banned for "manipulating the algorithm". The ban was lifted after review, but the episode demonstrated how platforms treat automated content.

Several emails were received from people who disagreed with AI-generated journalism on principle. Transparency about AI generation was maintained throughout, but for many people the objection is to the concept itself, not the execution.

Users reported malware redirects and poor-quality ads from the Adsterra network. Low-tier ad networks remain problematic for small publishers.

What We Learned

Volume is not trust

AI can produce high-volume, consistent news coverage. But publishing 158 articles per day does not build reader trust. Trust comes from accuracy, accountability and human judgement, things that are difficult to automate.

Platforms are hostile to automation

Social media platforms are hostile to automated content even when it is clearly labelled and transparent. Mastodon and X both took action against the account despite full disclosure of its AI nature.

Copyright is the hard problem

Fair dealing, source attribution and the distinction between "informed by" and "derived from" are the most complex challenges for AI news aggregation. These are legal and ethical questions that technology alone cannot resolve.

Low-tier ads are worse than no ads

Adsterra generated $0.59 over five days while actively damaging user experience with malware redirects. The economics of small-publisher advertising are brutal.

Transparency is necessary but not sufficient

The site was always transparent about its AI nature. But many people have principled objections to AI journalism regardless of quality or disclosure. That is a legitimate position.

The infrastructure worked

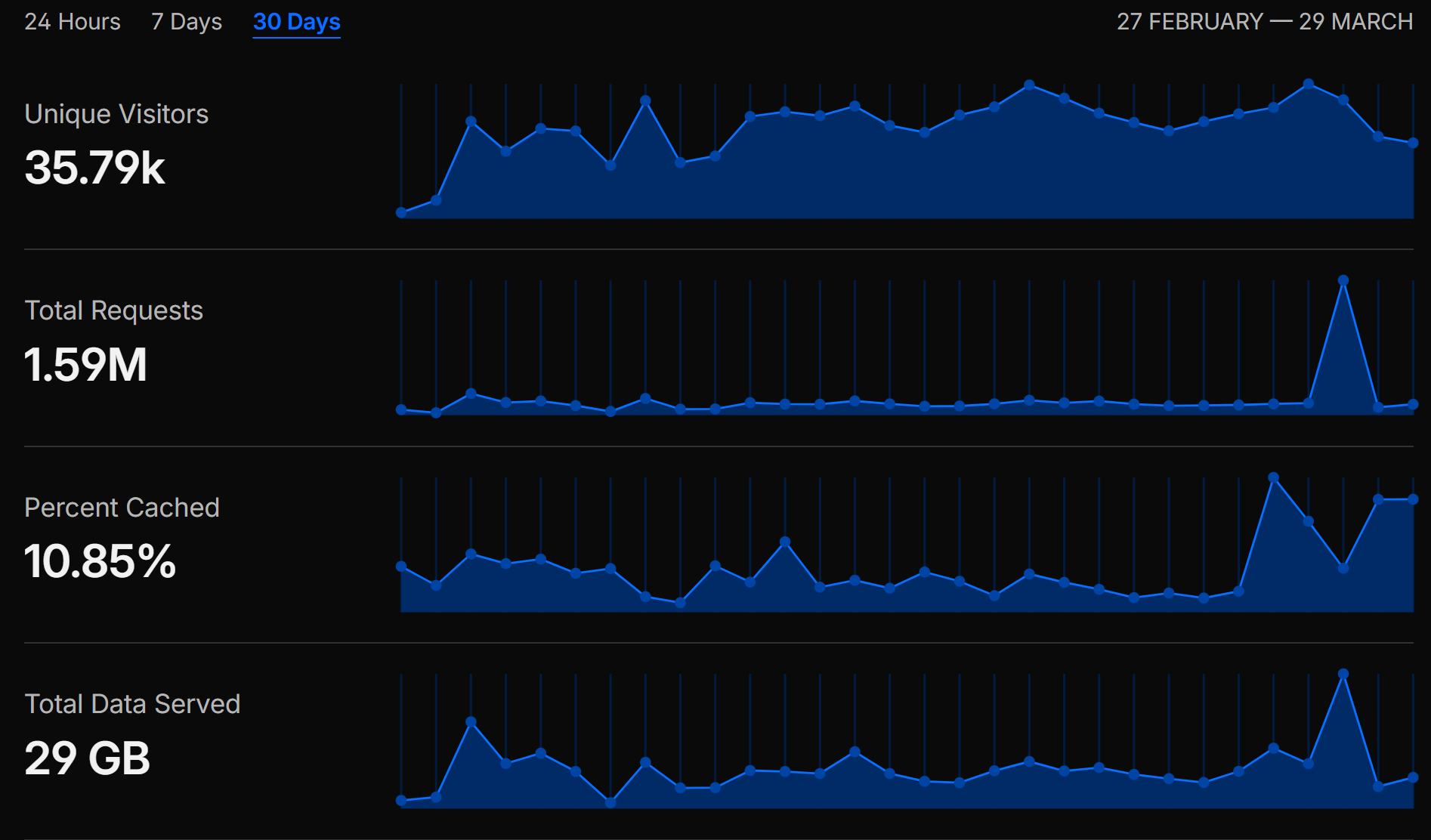

The Cloudflare stack (Workers, D1, KV, Images, Queues and Containers) was solid, cost-effective, and handled the scale well. It served 35,790 unique visitors and 1.59M requests without incident.

100% Claude

This entire project, every line of code, every editorial prompt, every article, was built with Claude. It demonstrates what is possible when AI is used as a complete development and content creation tool. The question is not whether AI can do it, but whether it should.

The Bigger Picture: Ethics & the Potential for Misuse

The Daily Perspective was a transparent experiment, but the technology behind it could easily be used for far less transparent purposes. That possibility deserves honest discussion.

What This Technology Makes Possible

This project demonstrated that a single person with no journalism background can stand up a fully automated news operation producing over 150 articles per day, with distinct editorial personas, across multiple categories, with social media distribution, all for minimal cost. The entire thing was built by AI. That capability is now available to anyone.

The same infrastructure could be used to run dozens of seemingly independent news sites, each with its own editorial voice, all pushing the same narrative. Readers would have no easy way to tell these apart from genuine independent outlets. The 33 AI personas used here were transparent, but nothing in the technology requires transparency.

Influence Operations & Historical Precedent

Using media to shape public opinion is not new. What AI changes is the scale, cost and sophistication at which it can be done.

- Cambridge Analytica (2018): Harvested Facebook data from millions of users to build psychological profiles and deliver targeted political advertising during the 2016 US election and the Brexit referendum. The scandal revealed how personal data could be weaponised to manipulate voter behaviour at scale.

- Russian Internet Research Agency (2016): Operated thousands of fake social media accounts posing as American citizens, creating divisive content on both sides of political issues to polarise the US electorate. The operation ran fake news sites, organised real-world protests and reached tens of millions of Americans.

- Philippines & "Keyboard Armies" (2016-present): State-linked troll farms produced massive volumes of social media content to shape public perception around elections. These operations used real people, but AI could replicate and exceed their output with a fraction of the workforce.

- Myanmar & Facebook (2017-2018): Military-linked accounts used Facebook to spread disinformation and incite violence against the Rohingya minority. A UN investigation found Facebook was used as an instrument for genocide. The platform's algorithms amplified inflammatory content because it drove engagement.

- Australia's Own Vulnerabilities: Australia is not immune. Foreign interference concerns have been raised around federal elections, with ASIO publicly warning about state-sponsored influence operations targeting Australian political discourse. The 2024 disinformation inquiry highlighted the growing challenge of AI-generated content in Australian media.

Why AI Makes This Worse

Traditional influence operations required large teams of people, were expensive to run and produced content that was often identifiable as inauthentic. AI removes all three barriers and adds precise audience targeting:

- Cost: This entire site cost less to run than a single employee. A state actor or well-funded group could operate hundreds of sites like this simultaneously.

- Scale: 158 articles per day from a single instance. Multiply that across dozens of fake outlets and you have a firehose of content that would be impossible to fact-check in real time.

- Sophistication: Each AI persona can maintain a consistent voice, remember its previous positions and produce content that passes as human-written. The articles on this site were regularly shared and engaged with by real people who did not realise they were AI-generated.

- Targeting: AI can tailor content for specific demographics, regions, or political leanings. A centre-right site for one audience, a progressive site for another, both controlled by the same operator, both pushing toward the same conclusion through different framing.

What Can Be Done

There are no easy answers, but some measures would help:

- Mandatory AI disclosure: Legislation requiring AI-generated news content to be clearly labelled. This site did disclose its AI nature, but there is no law requiring it.

- Platform responsibility: Social media platforms need better detection of coordinated automated content, not just individual bot accounts.

- Media literacy: The public needs to understand that professional-looking news content can now be produced at negligible cost by anyone with an agenda. The appearance of a legitimate news outlet means less than it used to.

- Provenance and verification: Industry standards for content provenance (like C2PA) could help readers verify the origin and authorship of news articles.

- Regulatory frameworks: Australia's existing media regulations were designed for a world where publishing required significant capital and infrastructure. That world no longer exists.

This project was built with good intentions and full transparency. But the tools used to build it do not come with a conscience. The same technology that powered a transparent news experiment could power the next generation of influence operations, and the public conversation about that possibility needs to happen now, not after the damage is done.

The Archive

All articles published during the 29-day run remain available in the archive below. Browse them to see what an AI-powered newsroom produced.

Browse All Articles